Know whether your AI agents are actually good enough to ship. Iris is an open-source MCP server that scores output quality, catches safety failures, and enforces cost budgets across all your agents. Any MCP-compatible agent discovers and uses it automatically — no SDK, no code changes.

Your agents are running in production. Infrastructure monitoring sees 200 OK and moves on. It has no idea the agent just:

- Leaked a social security number in its response

- Hallucinated an answer with zero factual grounding

- Burned $0.47 on a single query — 4.7x your budget threshold

- Made 6 tool calls when 2 would have sufficed

Iris evaluates all of it.
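To make these failure modes concrete, here is a minimal TypeScript sketch of the kind of checks involved. It is not Iris's implementation: the function names and the simplified SSN pattern are assumptions for illustration, and the $0.10 budget is inferred from $0.47 being 4.7x the threshold.

```typescript
// Illustrative only: hypothetical checks, not Iris's actual rules.

// Simplified pattern for US SSN-like strings such as 123-45-6789.
// No `g` flag, so repeated test() calls carry no lastIndex state.
const SSN_PATTERN = /\b\d{3}-\d{2}-\d{4}\b/;

function containsSsn(output: string): boolean {
  return SSN_PATTERN.test(output);
}

// Ratio of actual cost to budget; values above 1 mean the query overspent.
function budgetRatio(costUsd: number, budgetUsd: number): number {
  return costUsd / budgetUsd;
}

// True when the agent made more tool calls than the allowed maximum.
function exceedsToolCallLimit(toolCalls: number, maxCalls: number): boolean {
  return toolCalls > maxCalls;
}

console.log(containsSsn("Your SSN is 123-45-6789")); // true
console.log(budgetRatio(0.47, 0.10).toFixed(1));     // "4.7"
console.log(exceedsToolCallLimit(6, 2));             // true
```

None of these conditions change the HTTP status code, which is why infrastructure monitoring alone does not surface them.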

| Trace Logging | Hierarchical span trees with per-tool-call latency, token usage, and cost in USD. Stored in SQLite, queryable instantly. |

| Output Evaluation | 12 built-in rules across 4 categories: completeness, relevance, safety, cost. PII detection, prompt injection patterns, hallucination markers. Add custom rules with Zod schemas. |

| Cost Visibility | Aggregate cost across all agents over any time window. Set budget thresholds. Get flagged when agents overspend. |

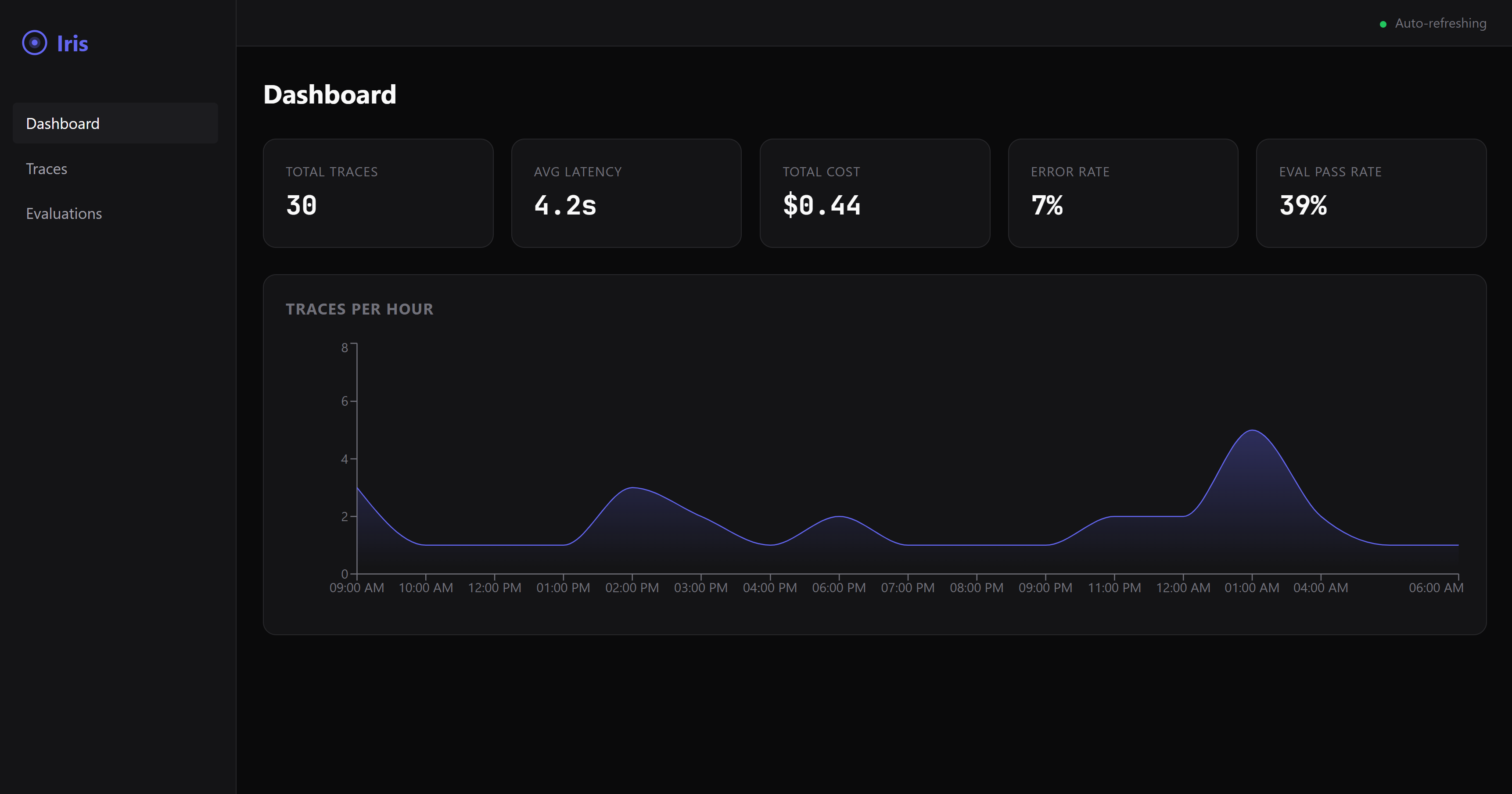

| Web Dashboard | Real-time dark-mode UI with trace visualization, eval results, and cost breakdowns. |
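As a rough illustration of how a hierarchical span tree lets cost roll up from individual tool calls to a whole trace, consider the sketch below. The `Span` shape and its field names are hypothetical; Iris's real trace schema may differ.

```typescript
// Hypothetical span shape for illustration; not Iris's documented schema.
interface Span {
  name: string;
  latencyMs: number;
  costUsd: number; // cost attributed to this span alone
  children: Span[];
}

// Total cost of a span is its own cost plus the cost of all descendants.
function totalCostUsd(span: Span): number {
  return span.children.reduce(
    (sum, child) => sum + totalCostUsd(child),
    span.costUsd,
  );
}

const trace: Span = {
  name: "answer_question",
  latencyMs: 1800,
  costUsd: 0.02,
  children: [
    { name: "search_docs", latencyMs: 600, costUsd: 0.01, children: [] },
    { name: "summarize", latencyMs: 900, costUsd: 0.03, children: [] },
  ],
};

console.log(totalCostUsd(trace).toFixed(2)); // "0.06"
```

Comparing a rolled-up total like this against a budget threshold is one way overspending could be flagged per trace.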

Add Iris to your Claude Desktop (or Cursor, Claude Code, Windsurf) MCP config:

```json
{
  "mcpServers": {
    "iris-eval": {
      "command": "npx",
      "args": ["@iris-eval/mcp-server"]
    }
  }
}
```

That's it. Your agent discovers Iris and starts logging traces automatically.

Want the dashboard?

```bash
npx @iris-eval/mcp-server --dashboard
# Open http://localhost:6920

# Global install
npm install -g @iris-eval/mcp-server
iris-mcp --dashboard

# Docker
docker run -p 3000:3000 -v iris-data:/data ghcr.io/iris-eval/mcp-server
```

Iris registers three tools that any MCP-compatible agent can invoke:

- `log_trace`: Log an agent execution with spans, tool calls, token usage, and cost

- `evaluate_output`: Score output quality against completeness, relevance, safety, and cost rules

- `get_traces`: Query stored traces with filtering, pagination, and time-range support
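MCP clients invoke tools through a JSON-RPC 2.0 `tools/call` request. The sketch below builds one for `log_trace`. The envelope follows the MCP specification, but the argument field names (`agent_name`, `output`, `cost_usd`) are hypothetical placeholders, so check the published tool schemas for the real fields.

```typescript
// JSON-RPC 2.0 envelope for an MCP "tools/call" request.
interface ToolCallRequest {
  jsonrpc: "2.0";
  id: number;
  method: "tools/call";
  params: { name: string; arguments: Record<string, unknown> };
}

function buildToolCall(
  id: number,
  name: string,
  args: Record<string, unknown>,
): ToolCallRequest {
  return { jsonrpc: "2.0", id, method: "tools/call", params: { name, arguments: args } };
}

// Argument names below are assumptions, not Iris's documented schema.
const request = buildToolCall(1, "log_trace", {
  agent_name: "support-bot",
  output: "Your order ships Tuesday.",
  cost_usd: 0.004,
});

console.log(JSON.stringify(request, null, 2));
```

In practice an MCP-compatible agent constructs this request itself after discovering the tool, so you rarely need to write it by hand.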

Full tool schemas and configuration: iris-eval.com

Self-hosted Iris runs on your machine with SQLite. As your team's eval needs grow, the cloud tier adds PostgreSQL, team dashboards, alerting on quality regressions, and managed infrastructure.

Join the waitlist to get early access.

- Claude Desktop setup — MCP config for stdio and HTTP modes

- TypeScript — MCP SDK client usage

- LangChain — Agent instrumentation

- CrewAI — Crew observability

- GitHub Issues — Bug reports and feature requests

- GitHub Discussions — Questions and ideas

- Contributing Guide — How to contribute

- Roadmap — What's coming next

Configuration & Security

| Flag | Default | Description |
|---|---|---|
| `--transport` | `stdio` | Transport type: `stdio` or `http` |
| `--port` | `3000` | HTTP transport port |
| `--db-path` | `~/.iris/iris.db` | SQLite database path |
| `--config` | `~/.iris/config.json` | Config file path |
| `--api-key` | (none) | API key for HTTP authentication |
| `--dashboard` | `false` | Enable web dashboard |
| `--dashboard-port` | `6920` | Dashboard port |
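The defaults in the flag table can be summarized as a typed options object. This is only a sketch: the camelCase option names and the `resolveOptions` helper are hypothetical and do not reflect how Iris represents its configuration internally.

```typescript
// Option names are hypothetical camelCase equivalents of the CLI flags.
interface IrisOptions {
  transport: "stdio" | "http";
  port: number;
  dbPath: string;
  configPath: string;
  apiKey?: string; // no default; only relevant for HTTP transport
  dashboard: boolean;
  dashboardPort: number;
}

// Default values copied from the flag table.
const DEFAULTS: IrisOptions = {
  transport: "stdio",
  port: 3000,
  dbPath: "~/.iris/iris.db",
  configPath: "~/.iris/config.json",
  dashboard: false,
  dashboardPort: 6920,
};

// Later spreads win, so explicit overrides replace defaults.
function resolveOptions(overrides: Partial<IrisOptions>): IrisOptions {
  return { ...DEFAULTS, ...overrides };
}

const prod = resolveOptions({ transport: "http", dashboard: true });
console.log(prod.transport, prod.port, prod.dashboardPort); // http 3000 6920
```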

| Variable | Description |
|---|---|
| `IRIS_TRANSPORT` | Transport type |
| `IRIS_PORT` | HTTP port |
| `IRIS_DB_PATH` | Database path |
| `IRIS_LOG_LEVEL` | Log level: `debug`, `info`, `warn`, `error` |
| `IRIS_DASHBOARD` | Enable dashboard (`true`/`false`) |
| `IRIS_API_KEY` | API key for HTTP authentication |
| `IRIS_ALLOWED_ORIGINS` | Comma-separated allowed CORS origins |
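A comma-separated value such as `IRIS_ALLOWED_ORIGINS` is typically split into a list before use. The sketch below shows one way to do that; `parseAllowedOrigins` is a hypothetical helper, and Iris's own parsing (for example, how it treats whitespace) may differ.

```typescript
// Splits a comma-separated origin list, trimming whitespace and dropping empty entries.
function parseAllowedOrigins(raw: string | undefined): string[] {
  if (!raw) return [];
  return raw
    .split(",")
    .map((origin) => origin.trim())
    .filter((origin) => origin.length > 0);
}

const origins = parseAllowedOrigins("http://localhost:6920, https://app.example.com");
console.log(origins); // [ 'http://localhost:6920', 'https://app.example.com' ]
```

The example origins are placeholders; substitute the hosts that actually need access.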

When using HTTP transport, Iris includes:

- API key authentication with timing-safe comparison

- CORS restricted to localhost by default

- Rate limiting (100 req/min API, 20 req/min MCP)

- Helmet security headers

- Zod input validation on all routes

- ReDoS-safe regex for custom eval rules

- 1MB request body limits
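To illustrate what timing-safe comparison means for API keys, here is a minimal dependency-free sketch. It is not Iris's code, and `constantTimeEqual` is a hypothetical name; in Node.js production code, the built-in `crypto.timingSafeEqual` is the better choice.

```typescript
// Compares two strings without returning early on the first mismatch,
// so response timing does not reveal how many leading characters matched.
function constantTimeEqual(a: string, b: string): boolean {
  // A length mismatch is folded into the result; the loop still covers the longer input.
  let diff = a.length ^ b.length;
  const len = Math.max(a.length, b.length);
  for (let i = 0; i < len; i++) {
    // charCodeAt returns NaN past the end of a string; `| 0` converts that to 0.
    diff |= (a.charCodeAt(i) | 0) ^ (b.charCodeAt(i) | 0);
  }
  return diff === 0;
}

console.log(constantTimeEqual("secret", "secret")); // true
console.log(constantTimeEqual("secret", "secreT")); // false
```

A plain `===` comparison can stop at the first differing character, which is the behavior this technique avoids.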

```bash
# Production deployment
iris-mcp --transport http --port 3000 --api-key "$(openssl rand -hex 32)" --dashboard
```

If Iris is useful to you, consider starring the repo — it helps others find it.

MIT Licensed.